OVERLOOK PRODUCT • GUIDE

Improve AI in real operations.

Guide helps teams validate AI behavior, verify performance, capture expert feedback, and continuously improve systems where they actually operate.

THE PERFORMANCE GAP

Most AI is tested before launch. Real performance happens after launch.

Pre-release testing matters, but production reality introduces new users, workflows, exceptions, and operating pressure. Teams need a way to improve AI continuously after deployment.

01

Static Evaluation

Testing ends before real operations begin.

02

Weak Feedback Loops

Useful issues are not captured systematically.

03

No Proof of Improvement

Leaders cannot see measurable progress over time.

CORE CAPABILITIES

One system for operational AI improvement.

01

Scenario Validation

Test AI behavior against real-world scenarios.

02

Operational Verification

Confirm performance in live environments.

03

Expert Feedback

Capture domain expert guidance.

04

Measurement Workflows

Track outcomes that matter.

05

Continuous Improvement

Use evidence to refine systems over time.

06

Intervention Triggers

Know when action is needed.

HOW IT WORKS

Turn real operations into better AI performance.

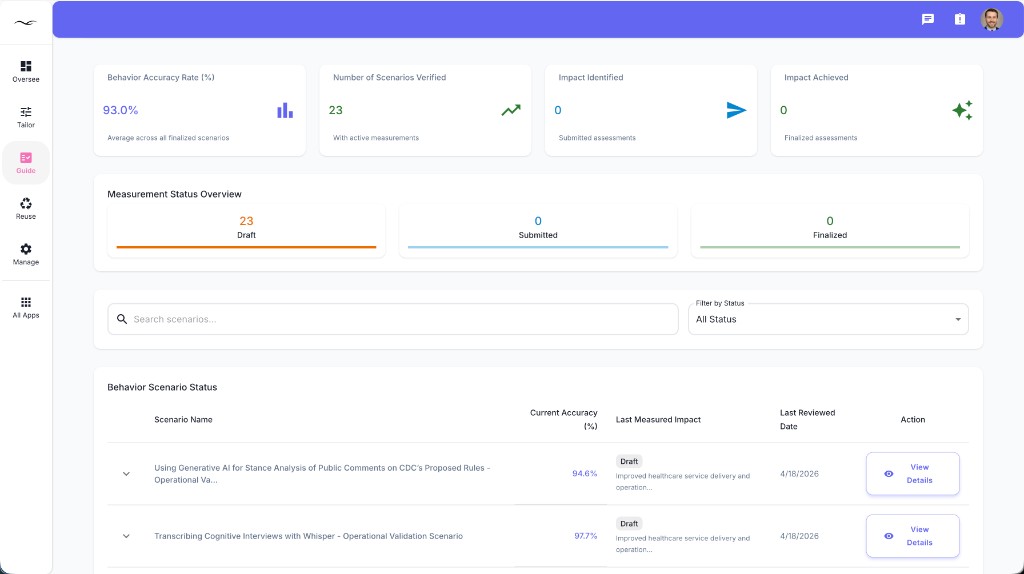

REAL OPERATIONS VIEW

Built for improvement where AI actually works.

- Real scenario validation
- Operational verification signals
- Feedback from domain experts
- Outcome measurement workflows
- Continuous performance learning

WHAT CHANGES

What teams gain with Guide

01

Better AI Performance

Systems improve with real evidence.

02

Faster Learning Cycles

Teams fix issues sooner.

03

Stronger Trust

Leaders see proof, not assumptions.

04

Measurable ROI

Improvement links to business outcomes.

BEYOND STATIC MONITORING

Typical tools watch systems. Guide helps improve them.

| Typical Tools | Guide |
|---|---|
| Passive monitoring | Active improvement workflows |
| Usage metrics only | Scenario + outcome evidence |
| Alert fatigue | Guided intervention timing |
| Isolated feedback | Connected expert input |
| Static reporting | Continuous optimization |

PART OF THE OVERLOOK SYSTEM

Guide is stronger as part of the full Overlook platform.

READY TO IMPROVE

Improve what matters.

Guide helps organizations continuously improve AI performance using validation, verification, feedback, and measurement in real operations.